I'm pleased to announce that pixels has finally been published. This crate is my attempt to be the easiest way to create a hardware-accelerated pixel frame buffer. The stated goals and comparison with similar crates can be found in the readme. Below is a discussion of use cases where I imagine pixels will fill an existing need.

TL;DR: It puts pixels on your screen.

Emulators

I wrote a CHIP-8 interpreter (technically not an emulator, but alas) yesterday using pixels and it was quite pleasant. At least, putting pixels on the screen was effortless. I'm not convinced that CHIP-8 is a great target for anything.

Here's a screenshot of the interpreter (running on macOS) running the simple CHIP-8 test program:

Just look at those big, chunky pixels! This is a 1-bpp display with a resolution of 64x32 pixels. In this screenshot, the display is scaled (by the GPU) to 50x the original size, just to give you some perspective.
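For a rough idea of what feeding such a display into a pixel buffer can look like, here is a minimal sketch. It assumes the interpreter keeps its screen as one `bool` per pixel and that the frame is plain RGBA8 bytes; `expand_1bpp` is a hypothetical helper for illustration, not part of pixels.

```rust
/// CHIP-8 display dimensions, as described above.
const WIDTH: usize = 64;
const HEIGHT: usize = 32;

/// Expand a 1-bpp display (one bool per pixel, row-major) into an RGBA8 frame.
/// Lit pixels become opaque white, unlit pixels become opaque black.
fn expand_1bpp(display: &[bool], frame: &mut [u8]) {
    assert_eq!(display.len(), WIDTH * HEIGHT);
    assert_eq!(frame.len(), WIDTH * HEIGHT * 4);

    for (on, rgba) in display.iter().zip(frame.chunks_exact_mut(4)) {
        let v = if *on { 0xFF } else { 0x00 };
        rgba.copy_from_slice(&[v, v, v, 0xFF]);
    }
}
```

The frame stays at 64x32; any scaling to the window size is left to the GPU.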

Emulators are a great use case for a pixel buffer. It's easy enough to set up a textured quad and stream the frame to the GPU. That's what pixels does.

Software Rendering

Have you ever wanted to write your own software rasterizer, ray tracer, or ray caster? Plenty of excellent tutorials exist for the subject. My personal favorite is the tiny* series, but there's also the In One Weekend series, specific to ray tracing.

Sure, you could just save your bitmap to an image file and open it in an image viewer. But what's the fun in that? You have the technology to put that bitmap directly on your screen without the middleman. In fact, you could go all crazy with it and show your rendering progressively evolve at 144 frames per second.

2D Animations

Remember HTML5 canvas? Oh, I do, fondly! pixels is a bit like <canvas>; it gives you some area to draw on. But it is not like the complementary CanvasRenderingContext2D API. You'll need another crate for 2D drawing primitives. Something like piet or raqote maybe. But to be honest, you probably want a GPU rasterizer for vector graphics, so pixels may not be the best choice for this use case. On the other hand, if you used CanvasRenderingContext2D to poke color values directly into a byte array, you really do want pixels!
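"Poking color values into a byte array" can be as small as the sketch below. It assumes a row-major RGBA8 frame with four bytes per pixel; `put_pixel` is a hypothetical helper name, not something the crate provides.

```rust
/// Write one RGBA color at (x, y) in a row-major RGBA8 frame of the given width.
/// Returns false (and writes nothing) when the coordinate is out of bounds.
fn put_pixel(frame: &mut [u8], width: usize, x: usize, y: usize, rgba: [u8; 4]) -> bool {
    if x >= width {
        return false;
    }
    // Byte offset of the pixel: 4 bytes per pixel, `width` pixels per row.
    let i = (y * width + x) * 4;
    match frame.get_mut(i..i + 4) {
        Some(px) => {
            px.copy_from_slice(&rgba);
            true
        }
        None => false, // y is past the last row
    }
}
```

Returning a `bool` instead of panicking is just one design choice; it keeps sloppy drawing code from crashing while prototyping.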

Prototyping Games

Finally, the most fun use case, in my opinion. I am a fan of rapid prototyping. And sometimes the fastest way to get something on screen is to just do pixel blits the old-fashioned way. pixels is an excellent fit for rapid prototyping. I mentioned earlier that it took me less than a day to get a working CHIP-8 interpreter. Since then, I have spent the great majority of my time working on a game using pixels, rather than on improvements to the library itself.
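For anyone who hasn't done it before, an old-fashioned blit is roughly the following. This is a sketch under my own assumptions: the `Image` type and `blit` function are hypothetical, images are tightly packed RGBA8, and alpha is treated as a simple on/off mask rather than blended.

```rust
/// A tightly packed, row-major RGBA8 image (hypothetical helper type).
struct Image {
    width: usize,
    height: usize,
    data: Vec<u8>,
}

/// Copy `src` into `dest` with its top-left corner at (dx, dy).
/// Pixels that fall outside `dest` are clipped, and fully transparent
/// source pixels (alpha == 0) are skipped.
fn blit(dest: &mut Image, src: &Image, dx: i32, dy: i32) {
    for sy in 0..src.height {
        let y = dy + sy as i32;
        if y < 0 || y >= dest.height as i32 {
            continue;
        }
        for sx in 0..src.width {
            let x = dx + sx as i32;
            if x < 0 || x >= dest.width as i32 {
                continue;
            }
            let s = (sy * src.width + sx) * 4;
            if src.data[s + 3] == 0 {
                continue;
            }
            let d = (y as usize * dest.width + x as usize) * 4;
            dest.data[d..d + 4].copy_from_slice(&src.data[s..s + 4]);
        }
    }
}
```

Negative offsets are allowed on purpose, so a sprite can slide in from off-screen without special cases.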

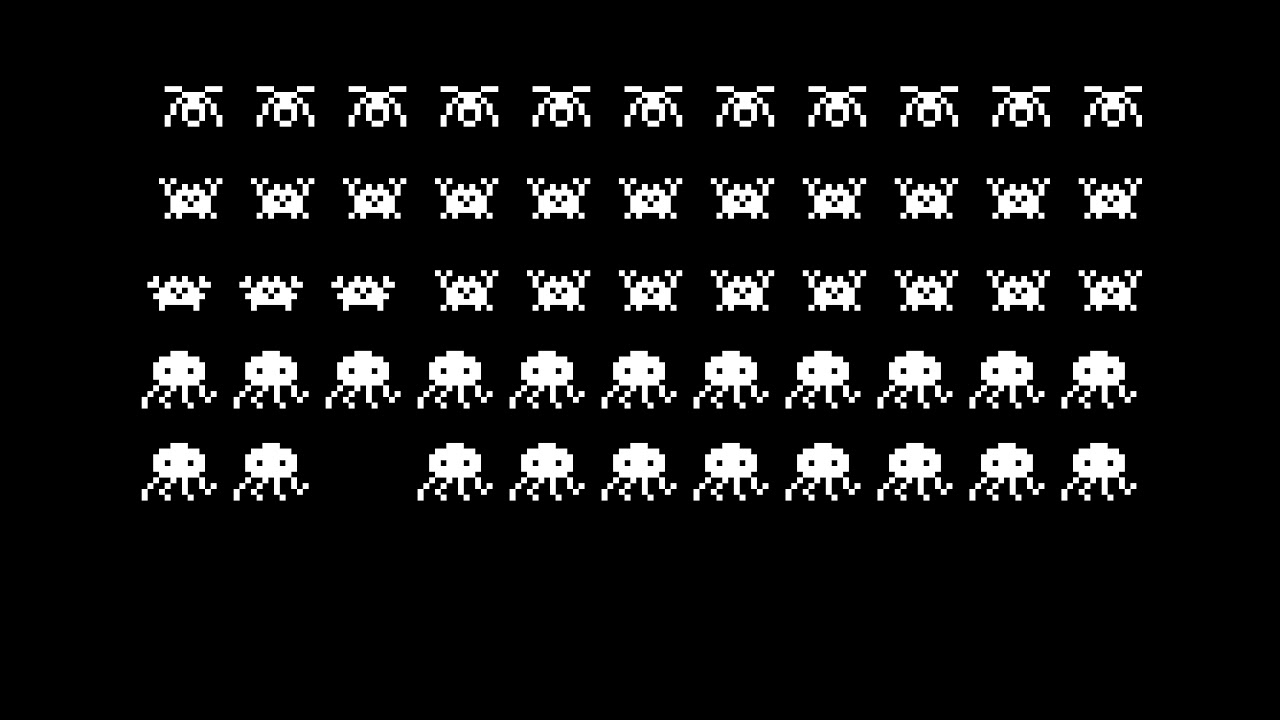

The game is a Space Invaders clone. Originally I was going for an authentic look and feel. And somewhere along the way I decided to spice it up a bit:

It's a work-in-progress.

And yeah, that's Ferris ... being all invadery and stuff.

More Info

A quick detour to discuss what pixels is not. It doesn't want to handle windowing, event loops, user input, audio, game engine stuff like scene management or ECS, 2D drawing primitives, image formats, or sprites.

It's a "Bring Your Own Window Management" crate through and through. Some developers like SDL2, some like Winit, some like GTK, some like Qt, some like Allegro (for some reason?), some like just sticking with native OS-provided APIs. And pixels doesn't care how you create a window! It only asks that the window manager implements raw-window-handle, and everything else will Just Work.

And in case you noticed that everything I've shown so far is black and white; yeah, I know. Both examples I decided to make with the crate are based on systems from the 1970s, before color was invented. (I hear the world looked pretty drab at the time.) Just a coincidence, I assure you; pixels will happily display any texture format that wgpu supports (which is a lot).

WIP Features

Two things are currently not working as intended: multi-stage render passes and non-square pixel aspect ratios.

The non-square pixel aspect ratios feature is something that I supported in my [unreleased] attempt at providing a hardware-accelerated pixel buffer two years ago with gfx pre-ll. This is the kind of thing you want for emulating old systems like NES and Genesis/MegaDrive, whose screen resolutions are wildly misrepresented with square pixels. But this feature depends on multi-stage render passes...
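To make the problem concrete, here is a sketch of the arithmetic involved. It is not how pixels does (or will do) it; `fit_with_par` is a hypothetical function, and the 8:7 pixel aspect ratio in the usage note is a commonly quoted figure for the NTSC NES that I'm treating as an assumption.

```rust
/// Compute the largest on-screen size (in square window pixels) for a buffer
/// of `buf_w` x `buf_h` pixels whose pixels are `par` times as wide as they
/// are tall, such that the result still fits inside `win_w` x `win_h`.
fn fit_with_par(buf_w: u32, buf_h: u32, par: f64, win_w: u32, win_h: u32) -> (u32, u32) {
    // Size of the picture measured in square-pixel units.
    let display_w = buf_w as f64 * par;
    let display_h = buf_h as f64;

    // Uniform scale factor limited by whichever window axis runs out first.
    let scale = (win_w as f64 / display_w).min(win_h as f64 / display_h);

    (
        (display_w * scale).round() as u32,
        (display_h * scale).round() as u32,
    )
}
```

For example, `fit_with_par(256, 240, 8.0 / 7.0, 1024, 960)` gives a 1024x840 rectangle, whereas square pixels (`par = 1.0`) would fill all of 1024x960 and stretch the picture vertically.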

The API is designed to allow multiple render passes to be added during the build phase. Conceptually, pixels will pass the output texture from the previous stage as the input texture for the next stage. That would allow some great shader effects to be added to what is otherwise just a simple textured quad.
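The chaining idea can be modeled on the CPU in a few lines. To be clear, this is only a conceptual stand-in: the real thing would run shaders on GPU textures, and `Pass`, `run_passes`, `invert`, and `darken` are all hypothetical names made up for this sketch.

```rust
/// A CPU stand-in for a render pass: it reads the previous stage's output
/// (RGBA8 bytes) and produces its own output.
type Pass = Box<dyn Fn(&[u8]) -> Vec<u8>>;

/// Run every pass in order, feeding each one the output of the pass before it.
fn run_passes(input: &[u8], passes: &[Pass]) -> Vec<u8> {
    let mut current = input.to_vec();
    for pass in passes {
        current = pass(&current);
    }
    current
}

/// Example pass: invert the color channels, leave alpha alone.
fn invert() -> Pass {
    Box::new(|src: &[u8]| -> Vec<u8> {
        src.chunks_exact(4)
            .flat_map(|p| [255 - p[0], 255 - p[1], 255 - p[2], p[3]])
            .collect()
    })
}

/// Example pass: halve the color channels, leave alpha alone.
fn darken() -> Pass {
    Box::new(|src: &[u8]| -> Vec<u8> {
        src.chunks_exact(4)
            .flat_map(|p| [p[0] / 2, p[1] / 2, p[2] / 2, p[3]])
            .collect()
    })
}
```

Order matters: `vec![invert(), darken()]` and `vec![darken(), invert()]` produce different pictures, which is why the passes would be added as an ordered list during the build phase.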

The obvious thing to do is add a CRT shader with some barrel distortion, and an NTSC shader for fuzzy pixels that bleed into one another. But it could also be used for special visual effects in general, like glowing lasers, wild implosions that distort the area around them, and other frilly fun stuff to make your games look amazing. I believe this is the true power of a hardware-accelerated pixel buffer that you cannot get out of a software pixel buffer. But it remains to be seen just how well it can work in practice.

And with that, pixels is up for grabs! Please feel free to provide any feedback, express concern or criticism, or just to share something you make with it. I'll be monitoring this thread, so this is a good place to talk about all things pixels. Thanks very much for reading to the end! You're the real hero.